Every CTO and CIO we've spoken to this year has Model Context Protocol (MCP) on their roadmap. The protocol solves one problem elegantly. But it leaves a set of harder, more expensive problems intact, and as enterprise AI moves from AI-assisted workflows to autonomous agent deployments, those problems don’t stay the same size. They compound.

2026 is widely described as the inflection point: the year enterprise AI moves from proof-of-concept to organization-wide deployment. Boards are asking for a clear path forward, not just a pilot update. Budgets are shifting from exploration to execution. And every technical leader we speak with is under real pressure to show that their AI initiatives can deliver measurable outcomes beyond the demo stage. Indeed, MCP has experienced explosive adoption since Anthropic introduced it in late 2024.

For most organizations, that path runs through agents. And agents run on integrations. MCP, the open standard for connecting AI models to external tools and data sources, is now on virtually every enterprise AI roadmap. The protocol promises to standardize how AI agents connect to the systems your business runs on, whether that’s Salesforce, SAP, Slack, GitHub, or the dozens of other tools that hold your organization’s operational knowledge.

What’s notable, though, is how rarely technical leaders arrive asking for MCP by name. In our ongoing research with over 800 CIOs, CTOs, CAIOs, and engineering leads, the requests are almost always framed as outcomes: faster delivery, safer deployment, fewer vendor dependencies, better reuse of what their teams have already built. MCP is driving the underlying conversation. The real question underneath is whether connecting AI to more systems actually makes the organization smarter, faster, and safer, and whether it holds up as the use cases get more demanding.

The excitement around MCP is warranted. The protocol represents a genuine step forward for enterprise AI tools integration, and the ecosystem is moving quickly. But a gap is opening between what these integrations look like in demos, and what they require in production. That gap is worth understanding clearly before you commit significant in-house engineering resources to a build, and understanding now, before the agent-first future raises the stakes further.

There’s a framing worth introducing here that we’ll develop in detail later: what we call “Safe MCP.” The idea is to use the protocol where it genuinely excels – transactional, action-oriented, live data access – within a governed knowledge layer that addresses what the protocol alone cannot: search quality, context, security, and scale. That distinction matters today. It matters considerably more as enterprise AI becomes more autonomous.

This post is written for the technical and business leaders doing that homework: CTOs, CIOs, CAIOs, CDOs, and heads of engineering at scale-ups and established enterprises who’ve moved past “what is MCP for enterprise AI?” and are now asking harder questions. Can this scale across our organization without becoming a tangled web of point-to-point connections that multiplies with every new tool you add – growing harder to govern, observe, and maintain over time? Is MCP the end-all be-all for enterprise AI integration, or are there real costs, risks, and second-order effects we should understand before committing? What are the security implications for our data and our people? And what does the operational model actually look like a year after deployment, not just in the first sprint?

What Model Context Protocol Does, and Why Enterprise MCP Momentum Is Real

Model Context Protocol is a standard that defines how AI models connect to external tools and data sources. Before it existed, every AI integration was bespoke: custom code for every tool, in patterns that were fragile, hard to maintain, and impossible to share across teams. MCP addresses what engineers call the N×M integration problem, where N systems each requiring M separate integrations produces combinatorial complexity that quickly becomes unmanageable. By establishing a universal interface, MCP means any AI agent can connect to any compliant tool through the same structured protocol. One standard, any system.

For enterprise AI agent integration, the practical payoff is real. Agents that need to take action in external systems benefit directly from what MCP enables: creating a Jira ticket, running a SQL query against a production database, triggering a Salesforce workflow, querying live operational metrics from SAP. Authentication, tool discovery, and response formatting are standardized. Pre-built connectors for major enterprise tools are proliferating, and the major model providers have aligned on the standard.

That’s worth acknowledging clearly. This analysis isn’t arguing against MCP as a protocol. The standard does what it’s designed to do, and organizations building agentic AI workflows are right to be evaluating it. But what it doesn’t do is solve the harder problems that emerge after the initial connections are established. And those are the problems that determine whether an enterprise AI initiative performs in production, or stalls in the gap between a promising pilot and something you can reliably scale.

The Agent-First Future Changes the Calculation

Here’s the context that makes the limitations below more urgent than they might appear in an early-stage MCP evaluation: enterprise AI in 2026 is not staying in its current form.

Right now, most enterprise teams are evaluating MCP primarily as an integration layer for AI assistants and copilots: tools that help people retrieve information and take actions more efficiently. That’s an accurate description of where the market is today. It’s not an accurate description of where enterprise AI is heading over the next two to three years.

Gartner projects that by 2028, roughly a third of enterprise software applications will incorporate agentic AI, up from less than 1% in 2024. Autonomous agents that handle multi-step tasks without continuous human oversight are moving from research demonstrations to production roadmaps. In our own research, AI initiative leads at enterprise organizations described their near-term ambitions consistently: not just “AI that helps my team find answers faster,” but “AI that can execute workflows, coordinate across functions, and operate autonomously across the systems our business runs on.”

That shift changes the infrastructure calculus substantially.

When AI moves from “assist a person with a task” to “complete this workflow autonomously, across multiple systems, over multiple steps, potentially coordinating with other agents,” the requirements compound. Consider what multi-agent orchestration actually demands in practice: an orchestrating agent that spawns sub-agents, hands off context, and expects results back. Long-running workflows that persist across hours or days, not seconds. Cross-agent permission propagation, where the question “what can this sub-agent access?” requires a coherent answer that traces back through the chain. Audit trails that capture not just what a human did, but what a sequence of agent steps accessed, decided, and changed, and why.

In that world, the quality of your enterprise knowledge layer becomes the rate-limiting factor. How quickly can an agent (or a network of agents) understand your organization’s full context? How reliably can it find the right information across all the systems where knowledge lives, with the right permissions applied at every step? How does security hold when there’s no human in the loop to catch a misdirected query?

The infrastructure decisions you’re making right now will determine how much “agentic debt” you carry into that future: the accumulated cost of integration choices that looked adequate for today’s use cases but weren’t designed for the autonomy, scale, and governance demands of tomorrow’s workflows. Organizations that invest in a governed, unified knowledge layer now won’t have to retrofit security, permissions, search quality, and observability onto a fragmented connector fleet later at a time when those retrofits are far more expensive and disruptive.

The five limitations below are real today. In the multi-agent, agentic enterprise of the future, each of them only compounds.

The MCP Limitations That Don’t Show Up in the Demo

For enterprises moving from MCP pilot to production, the following model context protocol limitations tend to surface once real workloads are running against real systems. Understanding them before you’re deep in the build is the difference between architecture that scales and infrastructure debt that compounds.

1. Your Search Quality Problem Gets Multiplied, Not Solved

Here’s what most MCP documentation doesn’t address directly: the protocol provides AI models with access to each tool’s existing search index. It does not improve that search. It delegates to it.

This has significant implications for enterprise AI agent quality. Most enterprise tools have notoriously limited search capabilities. Slack’s results at scale are historically inconsistent at best. Confluence search frustrates users across virtually every organization that uses it at any significant depth. And SharePoint's search results are characteristically glitchy: recently uploaded content can take days to appear, draft documents often aren't indexed at all, and permission-dependent content surfaces unpredictably.

When you connect these tools via MCP, your AI agent inherits every one of those limitations. A query spanning four systems produces four independently ranked result sets, each reflecting whatever that tool’s native search engine surfaces, handed to the model without any unified relevancy layer. The quality ceiling for your enterprise AI is set by your weakest tool.

“If search lacks in Confluence or SharePoint, it will lack with MCP, and then compound that across a handful of tools you’re trying to use to get an answer or work done.”

This isn’t a limitation that will be patched as the MCP ecosystem matures. It is a structural consequence of the federated architecture. Combining mediocre results from many tools does not produce a good answer; it produces a louder mess. And every tool you add to the chain compounds the problem.

In an agentic future, this degrades further still. When agents chain together – one agent producing context that another reasons over – poor search quality at step one propagates through every subsequent step. An autonomous agent that starts from bad context doesn’t self-correct; it builds on that context and amplifies the error downstream.

2. Latency That Compounds With Every Source You Add

An agent can fan out queries to Slack, Google Drive, Jira, and Salesforce simultaneously, but the response is still only as fast as the slowest tool in that batch. More importantly, for complex questions, one round rarely suffices. When initial results don't contain what the agent needs, it reformulates and tries again. Those retries are sequential: each pass waits on a full set of responses before the agent decides what to try next.

In practice, multi-tool MCP queries take roughly a minute for typical enterprise question complexity. For agentic AI workflows that run dozens of queries per session, or for tasks requiring real-time response in a live meeting or customer interaction, that latency is disqualifying. You are, at best, only as fast as your slowest tool, and that compounds with every query, every session, every user.

There is also a direct cost dimension worth flagging for enterprise AI agent ROI calculations. MCP returns verbose output from multiple tool calls, and those responses flood the model’s context window with noise before synthesis even begins. At enterprise scale, token consumption from multi-source MCP architectures adds up materially.

For autonomous, long-running agentic workflows, the latency problem becomes structural. A workflow that executes twenty MCP queries in sequence – each waiting on the slowest tool – doesn’t finish in twenty minutes. It compounds. At the orchestration level, where one agent waits on results from sub-agents that are themselves running sequential MCP queries, the math is worse. Architectures that look barely acceptable in single-agent pilots become unusable at the multi-agent scale organizations are planning for.

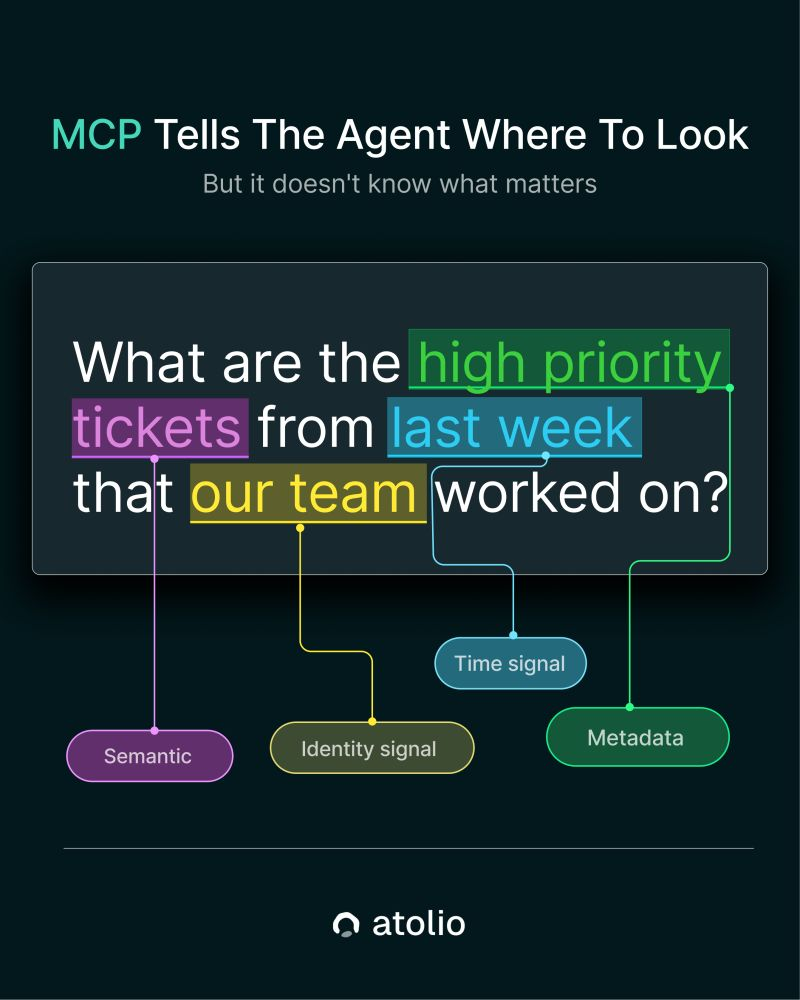

3. Context Fragmentation and the Identity Problem

MCP is purpose-built for independent, point-to-point connections: each tool handles its slice of a query in isolation, with no shared context layer across sources. This architecture works for lookup tasks where you know exactly which system holds the answer, and that answer lives entirely in one place.

Most real enterprise questions don’t work that way. Consider: “What are the high-priority tickets from last week that our team worked on?” Answering well requires identity signals (who is “our team”?), time signals, priority metadata from Jira, discussion context from Slack, and potentially related email. Each MCP server handles its slice of that query independently and returns whatever it can. No server sees across the others. No synthesis layer understands the relationship between what each returned.

For a typical enterprise question, the most relevant fragments of information are distributed across email, Slack, Confluence, Salesforce, and other systems simultaneously. An MCP-based architecture can direct the agent to each source. It cannot find the connections between those fragments, or weight them in the context of who’s asking, what they’re working on, or who they work with across systems. Without an identity and collaboration graph spanning all your tools, agents return results that are locally accurate and contextually incomplete. MCP only works well when information is neatly organized in a single system. In reality, it almost never is.

In a multi-agent world, context fragmentation becomes the core coordination problem. When an orchestrating agent hands off to a sub-agent, the sub-agent receives whatever context the orchestrator chose to pass, but it has no access to the broader organizational context the orchestrator may not have known to include. There is no shared memory layer. There is no understanding of the relationship between what different agents are doing simultaneously. Each agent starts from the context window it was given, and when that context is incomplete, multi-step autonomous workflows amplify the gaps rather than correct for them.

4. Security: A Critical Architecture Risk, Not a Configuration Setting

“Each new MCP connector is a new OAuth flow and a new potential injection point. The attack surface grows with every tool you connect.”

This is where CISOs, compliance leads, and security architects need to pay close attention, because it is consistently underweighted in how enterprise MCP adoption is discussed.

MCP-based enterprise deployments typically rely on individual OAuth flows per tool. Each integration requires its own authentication mechanism, and those tokens are exposed to the model at runtime. This creates the conditions for prompt injection attacks. Models cannot reliably separate data from instructions, and malicious content embedded in a GitHub PR comment, an email body, a Jira ticket, or any document the agent processes during a query can direct the model to leak credentials, exfiltrate data, or take unauthorized actions.

This is not a theoretical risk. Enterprise security teams are tracking it. They are under growing pressure to enable agent functionality anyway, and the gap between what security requires and what the business is pushing for is real.

From an MCP compliance and AI agent governance perspective, the picture compounds. Multi-connector architectures produce fragmented observability: different tools, different logs, different security models, no unified audit trail. Achieving a coherent view of what the agent accessed, when, and with what permissions requires aggregating evidence across systems that were not designed for that purpose. For organizations with MCP authentication enterprise requirements under SOC 2, HIPAA, or FedRAMP, this is an architectural problem. Enforcing consistent AI agent access control across a heterogeneous fleet of connectors, each with its own permission model and auth flow, is technically difficult and operationally demanding.

There is also a secondary risk that doesn’t always surface in technical conversations: employees composing prompts and queries may not understand the security implications of what their agent can access or what content it may process on their behalf. The blast radius of a misconfigured or compromised connector is not limited to the engineer who built it.

In an autonomous, multi-agent architecture, the security surface expands further. When an orchestrating agent delegates to a sub-agent, whose permissions apply? If a sub-agent is operating without a human actively monitoring each step, prompt injection in any document it processes can redirect the workflow without triggering any visible alert. When a long-running agentic workflow spans hours or days, the window in which a compromised step can cause damage before detection extends accordingly. The one-OAuth-token-per-tool permission model was not designed for agent chains that operate autonomously at scale.

5. Operational Ownership: What You Are Signing Up to Maintain

For most enterprise teams, the first MCP connections won't be custom-built: they'll be vendor-provided endpoints from tools already in your stack like GitHub, Google Workspace, Atlassian, Salesforce. That's the protocol's promise, and it largely holds. You don't have to build the connector; you connect to one the vendor has published.

What you do have to own is everything surrounding it. Security review, authentication management, monitoring, and the behavior of every agent that depends on that connection – those responsibilities land with your team, regardless of who built the endpoint. For internal tools, legacy systems, or anything that doesn't yet expose a native MCP implementation, someone is building from scratch, and the operational commitment starts earlier and runs deeper. But even in the vendor-provided case, connecting is the easy part.

Every connection in your fleet is a living dependency. Vendors update their MCP implementations on their own schedules. Rate limits change. Authentication flows evolve. An endpoint that works reliably in development may behave differently against real production volumes or after a vendor ships a breaking change. Someone must own it, triage failures, and respond when something breaks at an inconvenient time. At ten connections, this is a recurring responsibility. At thirty, it is a dedicated function.

For organizations with rigorous security postures or regulatory requirements, procurement and compliance cycles add a separate constraint. Getting a new MCP connection through security review, injection testing, and API approval in an enterprise environment can add one to three months per connector to your actual deployment timeline, vendor-provided or not. That figure rarely appears in early-stage planning.

In a multi-agent enterprise, this operational surface is no longer linear: it's multiplicative. A failure in one connection doesn't just degrade one agent's capabilities; it can cascade through any agent workflow that depends on that data source, silently returning incomplete context without surfacing a clear error. Diagnosing whether a degraded agentic workflow failed because of a connector issue, a permission change, an upstream implementation update, or an agent reasoning failure requires observability that most MCP deployments haven't built. The team that initially scoped the integration was solving for connecting agents to tools. The team that inherits the system at scale is solving for distributed failure modes across an autonomous system no individual person is watching.

What the DIY Build Path Actually Requires

Most teams reach for the build option because it appears faster. The protocol is open, tooling is accessible, and the first connector is running within a day or two. The real effort is everything surrounding the connector, and in aggregate, that effort looks like this:

What production-grade enterprise MCP infrastructure actually requires you to own:

- Reliability and compounding failure. Every MCP connection your agents depend on is another point of failure, and the failure modes compound. A slow tool increases latency for every query that touches it. A degraded endpoint returns lower-quality results that flood the context window and degrade synthesis. An unreachable service silently drops context rather than surfacing a clear error. Multiply this across a fleet of vendor-provided and custom connections, each with its own uptime profile, rate limits, and response quality, and issues in your agents' reliability ceiling are often invisible to users until something is meaningfully wrong.

- Authentication management, per tool. Each integration brings its own OAuth setup, token rotation policies, service account management, and credential storage requirements. Every new tool is a new auth surface. As connector count grows, so does exposure; the MCP OAuth enterprise security model is only as strong as its most neglected connector.

- Monitoring and alerting. There is no standardized observability layer across a fleet of MCP servers. You build the dashboards, define alert thresholds, and aggregate logs from disparate sources. Diagnosing whether a failure lives in the connector, the tool’s API, or the model requires correlating evidence across systems that don’t share a log format. This is the opposite of the “glass box” observability that AI governance requires.

- Permission schema integration. MCP connections typically inherit the permissions of the authenticating user or service account, which means an agent often gains access to everything that user can see, far broader than what the agent actually needs for its task. That overpermissioning is a quiet risk: it expands the blast radius of a compromised or misdirected agent well beyond what the use case requires. On the other end, if that user is deprovisioned, the connection breaks immediately, even if the workflow it powered is still useful to the team depending on it. Getting permission scope right, keeping it current as your organization evolves or as employee permissions change, and designing for continuity when individuals change roles or leave, is genuinely difficult across a heterogeneous fleet of tools, each with its own auth model.

- Version management. Slack modifies its API. Salesforce updates its schema. SAP deprecates an endpoint. A change in one upstream system can break a connector that was working fine the day before. Multiply this across every system you’ve integrated and you have a continuous maintenance function that demands engineering time, indefinitely.

- Security review and compliance cycles. In enterprise environments, new external API connections require security review, injection testing, and procurement approval. Teams report this process typically adding one to three months per connector to real deployment timelines, a cost that rarely surfaces in initial planning or vendor documentation.

Beyond the infrastructure and compliance layer, there is an experience dimension that is easy to underestimate during planning and felt clearly by the people actually using these systems once they are in production.

For employees and agents relying on these integrations in daily workflows, the reality of a production MCP fleet often looks like this: queries that should return in seconds take a minute, because the agent is waiting on the slowest tool in the chain. Answers that require context from multiple systems arrive in pieces, assembled from whatever each tool’s search happened to surface, with no unified sense of what’s most relevant to the person asking. The context window fills with verbose, partially relevant output from multiple tool calls, and the model’s synthesis degrades accordingly. A question about a customer account returns a Salesforce record, a Slack thread, and a Jira ticket, each accurate in isolation and none of them coherently connected into something the person asking can actually use.

These aren’t edge cases. They are the baseline user experience of sequential, federated queries against systems with inconsistent search quality. Because the friction is diffuse – spread across individual workflows rather than surfacing as a single system error – it often doesn’t register as a systemic problem until meaningful adoption has already occurred.

“The question isn’t whether you can build it. It’s whether maintaining this layer is the right use of your team’s time in years two and three.”

A Different Architectural Approach

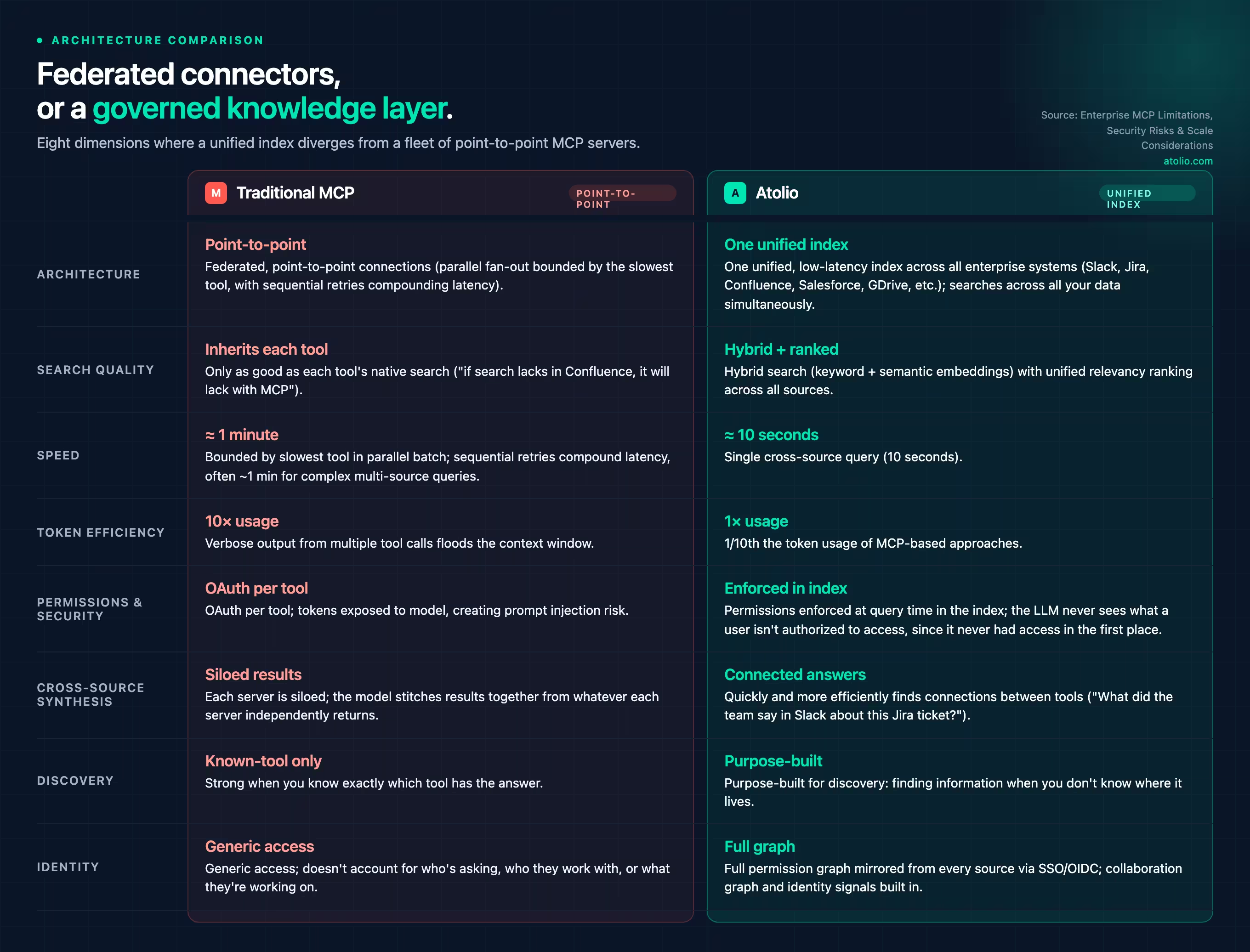

Atolio’s starting premise is a reframe worth sitting with: MCPs are a poor substitute for not having a high-quality organization-wide knowledge index that can be queried in milliseconds.

Rather than connecting an agent to your tools one at a time through protocol, Atolio builds and maintains a unified index across the systems where your company’s knowledge actually lives (Slack, Jira, Confluence, Google Drive, Salesforce, GitHub, and more) and exposes that index to AI through a single, governed, permission-aware layer. The agent doesn’t query Slack, then Jira, then Confluence. It queries one index that already understands all of them, returning results ranked by relevance to that specific user given their role, their team, and the signals of what they’re actually working on.

The architectural difference produces outcomes that matter across several dimensions.

Atolio vs. Traditional MCP: Architecture Comparison

The security model warrants a closer look. Atolio deploys entirely inside your own private cloud environment (AWS, Azure, GCP, or other VPC) or fully on-prem, and mirrors object-level ACLs from every source system in real time. User identity is mapped through your central SSO/OIDC provider across all connected tools, so the same access model that governs your systems governs what the AI can see. Permissions are enforced at query time, directly in the index. The LLM only ever sees what that specific user is authorized to access. This is not filtering after retrieval; unauthorized content was never accessed in the first place.

Because ACLs are tracked in near real-time across all connectors, an employee who loses access to a system loses that access in Atolio’s index at the same time, not at the next batch sync. For organizations with compliance requirements under SOC 2, HIPAA, or comparable frameworks, this is the kind of real-time access control and audit trail that enterprise AI agent governance actually requires in practice.

On performance: the reason Atolio returns cross-source results in roughly 10 seconds versus a minute for comparable MCP queries is not clever caching. A purpose-built, low-latency index designed to search across all your data simultaneously is structurally faster than sequential federated API calls bounded by the slowest tool’s response time. And because Atolio applies unified relevancy ranking across all sources through its own index – rather than passing queries to each tool and stitching back whatever they return – the results reflect what’s actually most relevant, not whatever each tool’s individual search engine happened to surface.

The cross-source synthesis capability is where the difference becomes most visible in day-to-day enterprise use. A question about a complex customer account requires context from Salesforce (account history), Slack (recent team discussion), Jira (open tickets), and email (recent correspondence). In a traditional MCP architecture, that’s four sequential queries across four separate auth contexts, producing four result sets the model has to reason over without understanding their relationship. With Atolio, it’s one query, returning the most relevant fragments across all sources, ranked by what matters to this specific user at this moment.

It’s important to be precise about what Atolio’s approach is not: an argument against MCP as a protocol. Atolio uses MCP in two specific roles. As an MCP client, it calls out to structured, transactional systems at runtime – databases, live operational data, SQL queries – for cases where indexing billions of rows isn’t appropriate and live data is essential. As an MCP server, Atolio exposes its knowledge base to external agents, so a customer support agent, coding assistant, or any third-party AI tool can connect to Atolio’s governed, permission-aware index rather than wiring up a dozen individual system integrations. For enterprise organizations evaluating multi-agent orchestration, this means agents get one clean, permissioned connection to everything your organization knows, rather than a brittle collection of point-to-point connectors.

This is what we mean by “Safe MCP”: extending Atolio’s long-standing security posture (your data is secure because you control it) into the agentic era. Rather than exposing raw OAuth tokens to autonomous agents, or relying on each tool’s permission model to hold when there’s no human actively watching, the agent connects once to a governed layer that already knows what your organization knows – and already knows who is allowed to access what. In a multi-agent world, this matters even more: whether the agent is a copilot running alongside a human or an autonomous worker executing tasks independently, the security and governance model doesn’t change. The governed layer handles it at every step.

How to Think About Your Path Forward

The choice between a DIY MCP strategy and a unified knowledge layer isn’t binary, and the right answer depends on what problem you’re primarily trying to solve. A few questions worth working through honestly before committing:

Is the primary use case knowledge retrieval and discovery, or taking action in external tools? MCP is well-suited to agentic, write-oriented workflows: an agent that creates tickets, triggers processes, queries live structured data, or takes action in external systems. For that use case, the protocol does what it’s designed to do. Where the calculus shifts is when the primary need is AI that understands your organization’s knowledge: what your people have discussed, decided, documented, and learned across all the tools where that knowledge lives. For that problem, the federated, tool-by-tool approach introduces constraints that compound at scale.

How many tools hold relevant knowledge, and how often does a real answer require synthesizing across more than one? If most meaningful questions span three or more systems, the latency and quality constraints of sequential MCP queries will become visible in production quickly. They’ll show up as slow responses, incomplete answers, and agents that feel useful in demos but frustrating in daily use.

What does your security and compliance posture actually require? If consistent permission inheritance across connected systems, meaningful audit trails, protection against prompt injection, and data residency controls are non-negotiable – and in most enterprise environments they are – those requirements need to be modeled into the architecture before you start connecting systems, not retrofitted after.

What is your team’s realistic capacity to own the ongoing maintenance of this layer? The honest answer to this question usually determines whether the build path is sustainable at the intended scale. It’s one thing to build ten connectors; it’s another to keep them current, secure, and observable across a production environment that changes continuously.

And ultimately: what are you actually optimizing for? This is the clarifying question worth returning to before any architecture commitment. If the goal is to help your people and the AI agents they’ll eventually rely on move faster across all the internal knowledge that helps them work more effectively, ask whether a sequential, federated, quality-variable approach actually delivers that outcome, shiny new protocol aside. An architecture that compounds search limitations across tools, constrains agents to wait on the slowest system in the chain, returns context that doesn’t account for who’s asking or what they’re working on, and introduces security exposure with every new connector may check the “we have enterprise AI agents” box while failing the practical test. Does this actually make our organization faster, smarter, and more capable? That’s the standard worth holding.

And looking further ahead: what does this infrastructure need to look like when your agents are autonomous? The organizations making these choices now are not just building for today’s AI assistant use cases. They’re building the foundation for autonomous agents that will operate across their entire stack. The question worth asking now is: when our agents are running multi-step workflows independently, coordinating with each other, accessing sensitive systems without a human in the loop at every step: what guarantees do we have that the underlying knowledge layer is accurate, permissioned, governed, and secure? That question has a much lower cost to answer before the architecture is set than after.

The Deployment Gap

There’s a framing that captures the MCP moment well: the difference between a promising demo and something you can reliably deploy at enterprise scale.

The demos are compelling, because the protocol delivers on its premise. Agents can connect to tools, surface information, and even take action. The production gap opens in what the demos don’t show: the search quality of the underlying systems, the latency of sequential queries across real enterprise data, the data exfiltration risk that emerges when prompt injection can orchestrate multiple connected tools in combination, and the operational commitment of maintaining a connector fleet as APIs and permissions evolve. The multi-tool risk is subtle but real: an agent with access to a file store and a messaging tool can be manipulated to read sensitive content from one and route it through the other, no credential theft required.

As enterprise AI moves toward the autonomous, multi-agent architectures that are already appearing on near-term roadmaps, those gaps don’t diminish. They become more consequential. The organizations that build for that future now – with a governed knowledge layer that handles security, permissions, search quality, and observability from the start – won’t be retrofitting those capabilities onto a fragmented connector fleet when the stakes are higher and the agents are operating without a human at every step.

For enterprise leaders building in this space, the most important question isn’t “do we use MCPs?” It’s the follow-up that matters: once everything is connected, what guarantees do you have that what comes back is relevant, permissioned, current, and actually helpful? Not just today, when a person is in the loop, but tomorrow, when the agent is running on its own.

That’s the layer worth investing in.

If you’re evaluating how to give AI reliable access to your company’s knowledge – not tool by tool, but across everything your organization has built, discussed, and documented – we’d be glad to show you how Atolio approaches it.

See how Atolio's unified knowledge layer works, or book time with our team to discuss further.

Frequently Asked Questions

1. What is agentic debt?

Agentic debt is the accumulated cost of AI infrastructure decisions that work adequately for today's use cases but weren't designed for the governance, scale, and autonomy demands of multi-agent workflows. Like technical debt, it compounds: each MCP connector added without a unified permission model, audit layer, or shared identity framework becomes expensive to retrofit when autonomous agent deployments arrive.

2. What is Model Context Protocol (MCP)?

Model Context Protocol (MCP) is an open standard that defines how AI models connect to external tools and data sources through a consistent interface. It solves the N×M integration problem. Before MCP, every AI-to-tool connection required custom code. With MCP, any compliant agent connects to any compliant tool through the same protocol, with authentication and response formatting handled consistently.

3. What is Safe MCP?

Safe MCP is an approach to using Model Context Protocol within a governed, permission-aware knowledge layer like Atolio’s rather than as a standalone integration strategy. Instead of wiring agents directly to each tool's OAuth flow – which fragments access control and exposes each connection as a potential injection surface – Safe MCP routes retrieval through a unified index that enforces access controls before content reaches the model, so security and governance hold whether agents are human-supervised or fully autonomous.

4. How do MCPs compare to APIs?

Traditional APIs are designed for developers: structured endpoints, versioned schemas, integration code written by humans. MCP servers are designed for AI agents: tools and data exposed in a format agents can discover and invoke autonomously, using a standardized protocol regardless of the underlying system. MCP reduces per-tool integration cost significantly. What it doesn't standardize is search quality, security governance, or observability of agent behavior.

5. How does MCP relate to RAG?

MCP-based retrieval is a form of RAG (Retrieval-Augmented Generation), but with a structural difference: it delegates search to each tool's native index rather than a purpose-built retrieval layer. Standard RAG uses a unified index with consistent relevancy ranking across sources. MCP inherits each tool's search quality, which means results across tools aren't ranked against each other and the quality ceiling is set by the weakest tool.

6. What is the N×M integration problem in enterprise AI?

The N×M integration problem describes the combinatorial complexity of connecting N enterprise systems to M AI tools: without a standard, each pair requires a unique custom integration. At 20 tools and 10 AI models, that's up to 200 bespoke connections to build and maintain. MCP reduces this to N+M components by establishing a universal interface: one server per system, one client per agent.

7. How long does it take to build an enterprise MCP integration?

The first connector often goes live in a day or two. The surrounding work – security review, injection testing, procurement approval, auth management, and monitoring setup – typically takes one to three months per connector in enterprise environments. That figure doesn't include the ongoing maintenance burden once the connector is in production.

8. What are the biggest security risks of MCP in enterprise?

The three primary risks are prompt injection (malicious content in any processed document can redirect model behavior), fragmented access control (each connector carries its own permission model with no unified enforcement layer), and limited observability (no unified audit trail across tools, making compliance reporting under SOC 2, HIPAA, or FedRAMP structurally difficult).

9. Can MCP support multi-agent orchestration in enterprise?

MCP enables individual agent-to-tool connections but doesn't natively support what multi-agent orchestration requires: shared context across agents, defined permission propagation through agent chains, and cross-agent observability. Sequential MCP queries also compound latency across workflow steps, making long-running autonomous workflows impractical at scale without a unified knowledge layer handling retrieval and governance.